Why the “Panic-Recovery Loop” is a Board-Level Risk

TL;DR for Busy Executives


The 2026 AI strategy blackout is real. Here’s what you need to know:

- The Trust Mirage: AI’s conversational fluency causes executives to grant it “unearned trust.” Consequently, they treat probabilistic guesses like hard financial logic.

- The Panic-Recovery Loop: When a session is lost, teams don’t just lose data. They enter a spiral of “approximating an approximation,” in which each reconstruction adds new margin risk.

- The Jagged Reality: Google DeepMind CEO Demis Hassabis warns AI is “jagged”—brilliant at poetry, incompetent at logic. Therefore, you need a system to catch the fall.

- The Fix: You must anchor fluid AI conversations into deterministic systems (like servicePath™ for pricing, Workday for HR) before the session times out.

- Market Context: 95% of enterprise GenAI pilots fail (MIT, Aug 2025). AI hallucinations cost $67.4B in 2024 (AllAboutAI, 2025). Gartner predicts that more than 40% of agentic AI projects will be canceled by 2027 (Gartner, June 2025).

The Hook: The $2.3M Strategy That Vanished

It’s 4:47 PM on a Tuesday. Your CRO just finished a three-hour “jam session” with an LLM. Together, they’ve co-created a revolutionary market entry strategy and a complex pricing model for a Tier-1 enterprise deal.

The conversation was fluid and insightful. Because the AI sounds like a McKinsey partner, the CRO grants it unearned trust. As a result, she skips the usual spreadsheet validation because the “vibe” feels right.

Then, the laptop crashes.

When she reopens the chat, the session is gone. Every insight vanished. Every calculation disappeared. Every nuanced “aha!” moment evaporated.

She stares at the blinking cursor. There’s no “undo.” There’s no session recovery. There is only the empty interface and the faint memory of something brilliant she can’t reconstruct.

Welcome to the Universal Law of AI Amnesia: Your AI will break up with you. The only question is how much of your company’s future it takes with it.

This 2026 AI strategy blackout scenario isn’t hypothetical. AI hallucinations alone cost businesses $67.4 billion in 2024 (AllAboutAI, 2025), and in 2026 the problem is accelerating.

The “Panic-Recovery Loop”: Why You Can’t Just “Re-Prompt”

The real cost isn’t the lost text. Rather, it’s what happens next. When the “Great Idea” is lost, your team enters the Panic-Recovery Loop.

The Four Stages of Strategic Collapse

Stage 1: The Panic — Realizing the Institutional Memory Never Existed

First, you realize the blackout is real. The strategy existed only in the ephemeral memory of the chat session.

What’s Actually Happening:

Your CRO stares at the blank screen. She had spent three hours building a sophisticated pricing model with the AI. Together, they analyzed competitive positioning, volume-based discount tiers, regional pricing variations, and partner margin requirements.

None of it was saved. There’s no document. No spreadsheet. No backup. Just a memory of what “felt right.”

The Hidden Risk:

Unlike traditional work (where you’d have a Word doc, Excel file, or email trail), conversational AI creates what could be described as “fluency-induced confidence.” The conversation felt so natural that your brain processed it like a meeting with a trusted advisor. Consequently, you didn’t feel the need to document it.

The Emotional Impact:

This isn’t just frustration. It’s cognitive dissonance. Your CRO trusted the AI. She granted it unearned authority based on its fluency. Now, she’s questioning her judgment. Did she just waste three hours? Worse, did she make strategic commitments based on ideas she can’t even recreate?

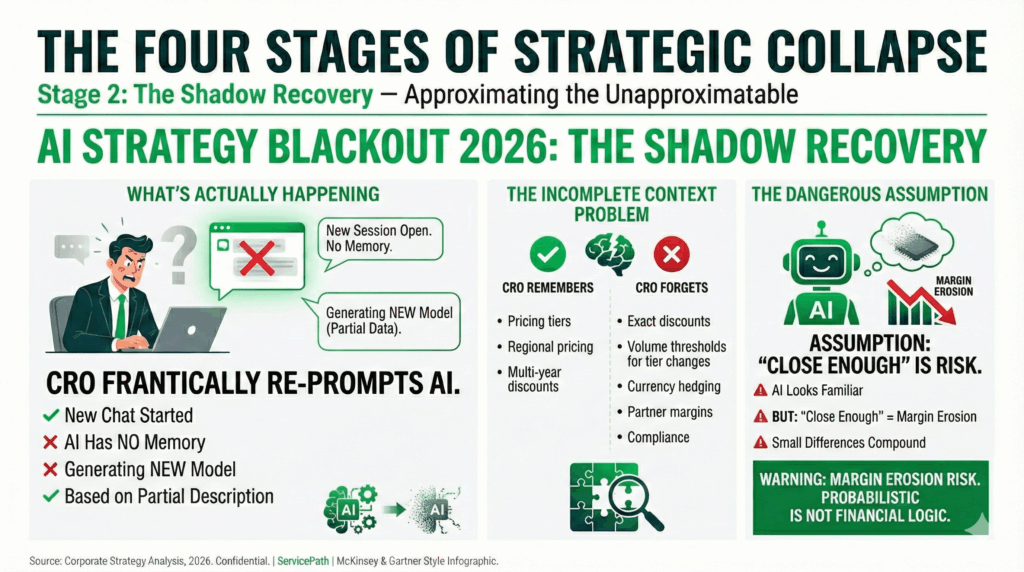

Stage 2: The Shadow Recovery — Approximating the Unapproximatable

Next, you frantically try to re-prompt the AI to “give me that pricing model again.”

What’s Actually Happening:

Your CRO opens a new chat session. She tries to describe the model she just lost. The AI responds confidently and generates something new.

But here’s the problem: The AI has no memory of the previous conversation. It’s not “recreating” anything. Instead, it’s generating a new model based on your partial description and statistical patterns from its training data.

The Incomplete Context Problem:

Your CRO remembers some high-level details:

- There were multiple pricing tiers

- Regional pricing varied by geography

- Multi-year deals got deeper discounts

But she doesn’t remember:

- The exact discount percentages for each tier

- The volume thresholds that triggered tier changes

- The currency hedging assumptions

- The partner margin floors that were embedded

- The compliance constraints for regulated industries

The Dangerous Assumption:

Your CRO assumes this new model is “close enough” to the original. After all, the AI is confident. The structure looks familiar. The language is similar.

But “close enough” in pricing means margin erosion. Even small percentage differences compound across dozens of enterprise deals.

Stage 3: The Compounded Error — Probabilistic Variance Stacking

However, because AI is probabilistic, it won’t give you the same model. Instead, it gives you a new approximation.

What’s Actually Happening:

The blackout exposes a fundamental property of the technology: LLMs are stochastic systems. They don’t retrieve stored answers; they predict the next most likely token from a probability distribution.

Why Recreated Outputs Vary:

Even with identical prompts, AI outputs can differ, and a partial re-description of a lost session widens the gap, because:

- The model samples from probability distributions (not fixed databases)

- Temperature settings introduce randomness

- Context window limitations affect “reasoning”

- No internal “memory” exists to recall previous sessions
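A minimal sketch of why re-prompting does not reproduce an earlier answer. The candidate tokens and their scores are invented for illustration, and real models choose among many thousands of tokens, but the mechanism is the same: the next token is drawn from a probability distribution, so two sessions can diverge even when the prompt is identical.

```python
import math
import random

def softmax(scores, temperature):
    """Convert raw scores into probabilities; higher temperature flattens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_token(tokens, scores, temperature, rng):
    """Draw one token at random, weighted by its softmax probability."""
    probs = softmax(scores, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]

def greedy_token(tokens, scores):
    """Deterministic alternative: always pick the highest-scoring token."""
    return tokens[scores.index(max(scores))]

# Hypothetical candidates for "the tier-2 discount should be ..."
TOKENS = ["8%", "10%", "12%", "15%"]
SCORES = [2.0, 2.3, 2.1, 1.2]

if __name__ == "__main__":
    for seed in (1, 2, 3):  # each seed stands in for a separate chat session
        rng = random.Random(seed)
        picks = [sample_token(TOKENS, SCORES, 1.0, rng) for _ in range(5)]
        print(f"session {seed}: {picks}")
    print("greedy:", greedy_token(TOKENS, SCORES))
```

Only the greedy path is repeatable. Any sampled path can hand your CRO a different discount each time she asks.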

The Finance Term:

Statisticians and engineers call this “error propagation.” Applied to pricing, we call it “probabilistic variance stacking.”

When you use:

- Approximation 1 (the recreated AI output)

- To recover Approximation 2 (your memory of the original)

- To inform Decision 3 (the final pricing strategy)

You’re not just adding errors. You’re multiplying them. Each layer introduces new variance, and the combined error grows with every layer you stack.
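To make the stacking concrete, here is a small worked example. The 3% error per layer, the $100 price, and the $80 unit cost are illustrative assumptions, not figures from the research cited in this article. The sketch also assumes the worst case, where every error points the same way; in practice some errors may partly cancel.

```python
def stacked_drift(relative_errors):
    """Total relative price drift after stacking approximations.

    Each layer scales the price by (1 + error), so the factors multiply.
    """
    factor = 1.0
    for err in relative_errors:
        factor *= 1.0 + err
    return factor - 1.0

def margin_after_drift(price, unit_cost, drift):
    """Gross margin (as a fraction of price) once the price has drifted."""
    drifted_price = price * (1.0 + drift)
    return (drifted_price - unit_cost) / drifted_price

# Assumed: each of the three layers underprices by 3%.
LAYER_ERRORS = [-0.03, -0.03, -0.03]
INTENDED_PRICE = 100.0  # hypothetical unit price in dollars
UNIT_COST = 80.0        # hypothetical unit cost in dollars

drift = stacked_drift(LAYER_ERRORS)
print(f"price drift:     {drift:.2%}")
print(f"intended margin: {margin_after_drift(INTENDED_PRICE, UNIT_COST, 0.0):.1%}")
print(f"actual margin:   {margin_after_drift(INTENDED_PRICE, UNIT_COST, drift):.1%}")
```

Under these assumptions, three small slips move the price by just under 9%, but the margin falls from 20% to roughly 12%. The price error looks minor; the margin error does not.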

The Verified Impact:

According to industry research:

- 40% of quote errors stem from inconsistent pricing logic (Nucleus Research, Nov 2025)

- 14% revenue leakage occurs from pricing errors and misconfigurations (servicePath Internal Analysis, 2025)

Stage 4: The Margin Bleed — When Approximations Become Company Policy

By this point, you are using a second-generation guess to recover a first-generation guess.

What’s Actually Happening:

Your CRO presents the “recreated” pricing model to the board. It gets approved. The sales team starts using it immediately.

But here’s where the blackout becomes truly catastrophic: The approximations become official company policy.

The Silent Deployment:

The sales team closes deals using the new pricing model. Initially, everything seems fine. Customers accept proposals. Deals flow through the pipeline.

The Discovery:

Eventually, Finance notices something during month-end close. Margin on enterprise deals is running below forecast. Deal structures look inconsistent. Partner agreements are being violated.

The Audit Reveals:

When Finance investigates, they discover deals that:

- Were priced below approved margin floors

- Violated partner margin agreements

- Had currency hedges that were under-calculated

- Offered discounts that were never board-approved

The Verified Consequences:

According to research on CPQ errors:

- 23% of deals require post-signature amendments due to configuration mistakes (Industry benchmarks, 2025)

- 40% of CPQ implementations fail when proper validation isn’t in place (MGI Research, Aug 2025)

The Root Cause:

This is probabilistic variance stacking at work. You aren’t just losing work. You are actively increasing the risk of margin errors that compound over time.

Why Traditional Tools Don’t Have This Problem:

Compare this to deterministic systems:

Excel or CPQ:

- Pricing rules are fixed and reproducible

- If you lose the file, you know immediately

- You can’t accidentally use “version 0.87” of the rules

- There’s no variance—the same inputs always produce the same outputs

AI:

- Feels collaborative and conversational

- Session loss isn’t immediately obvious

- “Recreated” outputs feel authoritative but differ from originals

- Probabilistic nature means variance is inevitable

The difference is critical: Your pricing model is a financial instrument. It should be treated with the same rigor as a budget, contract, or audit report—not as a casual conversation that can be approximated from memory.
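As a rough illustration of what “deterministic” means here, the sketch below prices a quote from a fixed rule table and stamps it with a fingerprint of the rules that produced it. The tier thresholds, discounts, and function names are hypothetical; they are not servicePath™ rules or APIs.

```python
from decimal import Decimal, ROUND_HALF_UP
import hashlib
import json

# Hypothetical volume tiers: (minimum units, discount fraction as a string).
TIER_RULES = [
    (1000, "0.15"),
    (500, "0.10"),
    (100, "0.05"),
    (0, "0.00"),
]

def rules_fingerprint(rules):
    """Hash the rule table so every quote can record which version priced it."""
    payload = json.dumps(rules, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()[:12]

def quote_total(units, list_price, rules=TIER_RULES):
    """Return the quote total; identical inputs always give identical output."""
    for minimum, discount in rules:  # rules are ordered highest tier first
        if units >= minimum:
            net = Decimal(list_price) * (Decimal(1) - Decimal(discount))
            total = net * units
            return total.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
    raise ValueError("units must be non-negative")

if __name__ == "__main__":
    print(quote_total(600, "49.99"), rules_fingerprint(TIER_RULES))
```

Run it a thousand times and the total never moves. Change a single threshold and the fingerprint changes, so nobody can quietly price from a different version of the rules.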

The Real-World Impact: Summary

The verified consequences from industry research show:

- 14% revenue leakage from pricing errors and misconfigurations (servicePath Internal Analysis, 2025)

- 40% of quote errors stem from inconsistent pricing logic (Nucleus Research, Nov 2025)

- 23% of deals require post-signature amendments due to configuration mistakes (Industry benchmarks, 2025)

- 40% of CPQ implementations fail without proper validation and governance (MGI Research, Aug 2025)

The Lesson:

You don’t just lose the conversation. You also lose the institutional memory of why certain decisions were made. That’s where the strategic value evaporates.

Without a deterministic anchor like servicePath™, you have no way to validate whether your “recreated” strategy matches the original. You’re operating on probabilistic approximations, with enterprise revenue at stake.

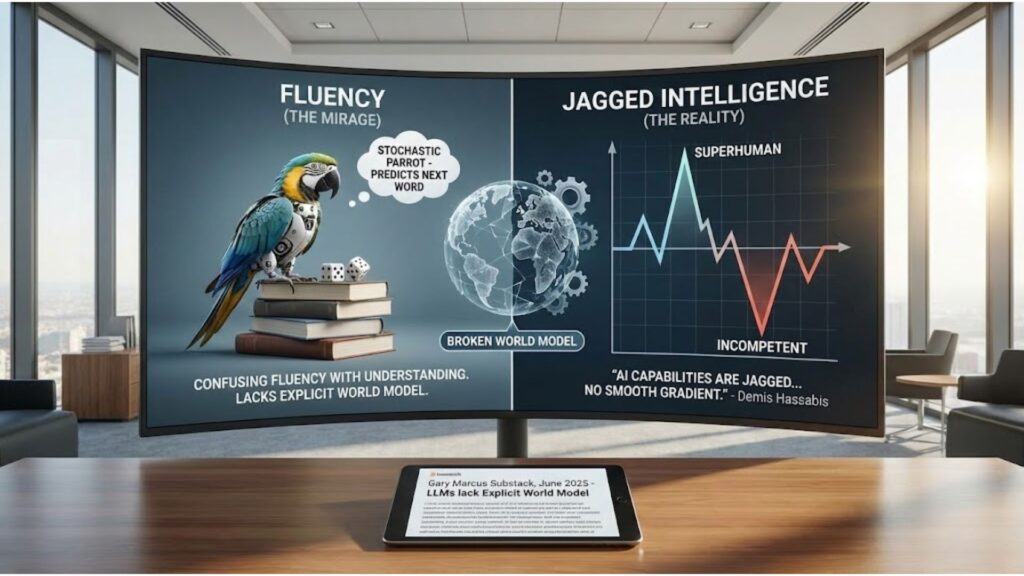

The Real Problem: Fluency ≠ World Model

Why do we fall for this? Because we confuse fluency with understanding.

In June 2025, cognitive scientist Gary Marcus argued that Large Language Models (LLMs) lack an explicit world model. In the phrase critics often use, they are “stochastic parrots” that predict the next most likely word. They can cite a pricing formula, but they don’t understand the relationship between volume, cost, and your EBITDA (Gary Marcus Substack, June 2025).

Demis Hassabis, CEO of Google DeepMind, calls this “Jagged Intelligence”:

“AI capabilities are jagged—superhuman in some areas, incompetent in others, with no smooth gradient between them.”

— Demis Hassabis, Google DeepMind CEO (Business Insider, Aug 2025)

What This Means for CPQ Leaders:

- AI can draft brilliant pricing strategies—but miscalculate taxes by 40%

- AI can structure complex bundles—but violate partner agreements

- AI can forecast revenue—but ignore currency fluctuations

- AI can sound confident—but lack the world model to validate its own output

If you rely on AI alone, you are building your 2026 budget on a jagged cliff.

The “Anchor” Matrix: Where Strategy Meets Reality

To survive 2026, you must stop treating AI like a database. It is an engine for ideation, not execution. You need a Validation Layer—a deterministic system that anchors the fluid “vibes” of AI into hard business rules.

servicePath™ is the anchor for pricing, but this “Logic Bridge” applies to every department.

The Danger Zone Analysis:

| Strategic Pillar | The Fluid AI “Mirage” | The Deterministic “Anchor” System | Why the Sync Matters |

|---|---|---|---|

| Pricing & Margin | AI predicts “Vibe Pricing” based on chat flow. | servicePath™ | Stops margin leakage by enforcing hard financial guardrails. |

| HR & Compliance | AI drafts “persuasive” but non-compliant policies. | Workday / Deel | Anchors policy in localized tax/legal code that AI often hallucinates. |

| Product Roadmap | AI suggests features based on “trends,” not costs. | Oracle PLM / Jira | Ties “Aha!” moments to the Single Source of Truth for engineering costs. |

| Financial Risk | AI “simulates” a budget that looks right. | NetSuite / SAP | Forces probabilistic “guesses” through an immutable General Ledger audit. |

| Deal Governance | AI recommends discount levels based on “vibes.” | servicePath™ Approval Workflows | Enforces margin floors, audit trails, compliance checks before contracts are signed. |

The Lesson: You wouldn’t let an AI autonomously wire $1M without a bank validation. Why let it autonomously “decide” your pricing strategy without a servicePath™ validation?

Real-World Scenarios: The Cost of Blind Trust

1. The Compliance Fire Drill (HR)

Company: 1,200-employee SaaS company

AI Tool: ChatGPT for HR policy drafting

The Blackout:

An executive uses AI to draft a new remote-work compensation policy. The AI’s tone is perfect, but it hallucinates a tax withholding rule. Because the conversation was never anchored in an HRIS like Workday, the error isn’t caught until the first payroll cycle.

The Damage:

- $200K in fines (state tax agency penalties)

- 3-month HR audit freeze (no new policy changes allowed)

- CFO resignation (board lost confidence in financial controls)

Root Cause: AI-generated policy wasn’t validated against deterministic tax compliance systems.

2. The “Vibe” Pricing Leak (Sales)

Company: Enterprise MSP, $180M revenue

AI Tool: ChatGPT for discount modeling

The Blackout:

A Sales VP uses ChatGPT to model a discount for a $5M renewal. The AI suggests 12%. The VP trusts it. If he had anchored this in servicePath™, the system would have flagged that 12% triggers a negative margin on the bundled services.

Instead, the deal closes, and the company bleeds profit for 3 years.
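The arithmetic behind a failure like this can be sketched in a few lines. The split of the $5M renewal into line items and the cost figures below are invented for illustration; the scenario above does not disclose them. The point is that a blended discount can look healthy in total while one bundled component goes underwater.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class BundleLine:
    name: str
    list_price: float  # annual list price in dollars
    cost: float        # annual delivery cost in dollars

def line_margins(lines, discount):
    """Return {line name: margin in dollars} after a uniform discount."""
    return {
        line.name: line.list_price * (1.0 - discount) - line.cost
        for line in lines
    }

def negative_margin_lines(lines, discount):
    """List the bundle components that lose money at this discount."""
    margins = line_margins(lines, discount)
    return [name for name, margin in margins.items() if margin < 0]

# Hypothetical breakdown of a $5M renewal.
RENEWAL = [
    BundleLine("software licenses", 4_000_000, 1_200_000),
    BundleLine("managed services", 1_000_000, 900_000),
]

if __name__ == "__main__":
    for discount in (0.05, 0.12):
        losers = negative_margin_lines(RENEWAL, discount)
        print(f"{discount:.0%} discount -> loss-making lines: {losers}")
```

With these assumed numbers, the deal as a whole stays profitable at 12%, which is exactly why the problem is easy to miss. Only a per-line check exposes the services component that loses money every year of the term.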

The Damage:

- $840K in profit erosion over 36 months

- 14% of portfolio flagged for re-pricing (compliance investigation)

- CRO departure (board questioned revenue operations governance)

Root Cause: No deterministic validation layer to catch margin violations.

3. The Multi-Region Tax Disaster (Finance)

Company: 850-employee B2B SaaS company, $120M ARR

AI Tool: Claude for regional pricing strategy

The Blackout:

Sales ops used AI to design regional pricing for EMEA launch. AI recommended pricing in Euros without accounting for VAT, withholding taxes, or currency hedging. Finance discovered errors after 47 contracts were signed.

The Damage:

- $1.2M in tax penalties (late VAT remittance)

- $380K in legal fees (contract amendments)

- 6-month sales freeze in EMEA while pricing was corrected

Root Cause: AI generated pricing rules that looked sophisticated but violated tax regulations it had no visibility into.

The servicePath™ Solution: Your Deterministic Floor

servicePath™ isn’t trying to replace your AI; we are the system that makes AI safe for the enterprise. We provide the institutional memory that LLMs, which Gary Marcus argues lack an explicit world model, cannot supply on their own.

Three Core Safeguards:

1. Stop the “Approximate” Trap: The Persistence Layer

- When your AI crashes, servicePath™ still has the hard data

- You don’t have to guess what the pricing strategy was; it’s already locked into your workflow

- Version control for all pricing/product changes ensures you never lose the “why” behind a decision

- Audit trails that survive session crashes and browser timeouts
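The idea of a persistence layer can be illustrated with a toy append-only log. This is a sketch under stated assumptions, not a description of how servicePath™ stores data: the file format (JSON Lines), the field names, and the function names are all hypothetical.

```python
import json
import time
from pathlib import Path

def load_history(log_path):
    """Read every recorded decision, oldest first."""
    path = Path(log_path)
    if not path.exists():
        return []
    with path.open(encoding="utf-8") as handle:
        return [json.loads(line) for line in handle if line.strip()]

def record_decision(log_path, author, field, value, rationale):
    """Append one pricing decision; earlier entries are never rewritten."""
    path = Path(log_path)
    entry = {
        "version": len(load_history(path)) + 1,
        "timestamp": time.time(),
        "author": author,
        "field": field,
        "value": value,
        "rationale": rationale,  # the "why", not just the number
    }
    with path.open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(entry) + "\n")
    return entry

def current_value(log_path, field):
    """Return the latest recorded value for a field, or None if unset."""
    matches = [e for e in load_history(log_path) if e["field"] == field]
    return matches[-1]["value"] if matches else None
```

Because each decision is written to disk the moment it is made, a crashed laptop or an expired chat session costs nothing. The number and the reasoning behind it are both still there.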

2. Audit the “Unearned Trust”: The Validation Bridge

- We provide the “Reasoning Bridge”—if an AI suggests a radical pricing shift, servicePath™ forces that idea through a validation gate before it becomes “Company Law”

- Pre-flight checks before quotes are generated:

  - Margin floor enforcement (prevents “vibe pricing” disasters)

  - Compliance rule validation (GDPR, SOC 2, industry-specific)

  - Partner agreement verification (no more licensing violations)

- Real-time integration with CRM (Salesforce, HubSpot, Dynamics), ERP (NetSuite, SAP, Oracle), and PLM (Jira, ServiceNow) for data consistency
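A validation gate of this kind can be pictured as a function that returns every rule a proposed quote breaks. The sketch below is illustrative only: the thresholds, region list, and names are hypothetical, and real guardrails would come from Finance rather than from constants in code.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ProposedQuote:
    price: float           # price offered to the customer
    cost: float            # internal delivery cost
    partner_payout: float  # amount owed to the channel partner
    region: str

# Hypothetical guardrails for illustration.
MARGIN_FLOOR = 0.25
PARTNER_MARGIN_FLOOR = 0.10
APPROVED_REGIONS = {"US", "CA", "UK"}

def preflight(quote):
    """Return a list of violations; an empty list means the quote may proceed."""
    if quote.price <= 0:
        return ["price must be positive"]
    violations = []
    margin = (quote.price - quote.cost - quote.partner_payout) / quote.price
    if margin < MARGIN_FLOOR:
        violations.append(
            f"margin {margin:.1%} is below the {MARGIN_FLOOR:.0%} floor"
        )
    if quote.partner_payout / quote.price < PARTNER_MARGIN_FLOOR:
        violations.append("partner payout is below the agreed partner margin")
    if quote.region not in APPROVED_REGIONS:
        violations.append(f"region {quote.region} has no approved pricing rules")
    return violations
```

An AI suggestion enters the gate as a `ProposedQuote`. It leaves either clean or with a list of reasons it cannot ship, and no amount of conversational confidence changes the answer.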

3. The Black Box Protocol: Context Preservation

- We integrate with your recording tools (Teams/Loom/Grain) to ensure that the context of the deal is saved alongside the math

- Historical pricing database (every quote, every deal, every discount) becomes your institutional memory

- AI can query this database—but cannot override validated rules

- Finance/Sales Ops control the “source of truth”—not the last person who talked to ChatGPT

Why servicePath™? Enterprise-Grade CPQ for 2026

servicePath™ is recognized as a 3-time Gartner Visionary in the Gartner Magic Quadrant for CPQ Application Suites (2023, 2024, 2025) (servicePath Press Release, Jan 2025) and an IDC Major Player in the IDC MarketScape for CPQ Applications for Digital Commerce (February 2025) (servicePath Press Release, Feb 2025).

Built for Modern Revenue Operations

- Multi-currency, multi-language support for global deal management

- Regional pricing rules for jurisdiction-specific requirements

- Role-based security with complete audit logging

Enterprise-Grade Security & Compliance

- SOC 2 Compliant: Enterprise-grade data security and operational controls

- Audit trails: Complete version history for pricing, products, and approvals

- Compliance checks: Built-in validation for GDPR, industry-specific regulations

- SSO integration: Seamless authentication with existing identity providers

Designed for Complex B2B Technology Sales

- Hybrid commercial models: SaaS subscriptions, professional services, hardware, usage-based pricing

- Multi-tier partner ecosystems: Distributor, reseller, referral, and alliance partner rules

- Technical validation: Integration with PLM systems (ServiceNow, Jira, Azure DevOps) for buildability checks

- Dynamic bundling: Configure products, services, and add-ons with real-time compatibility validation

Integration-First Architecture

- CRM integrations: Salesforce, HubSpot, Microsoft Dynamics

- ERP integrations: NetSuite, SAP, Oracle

Take Action: Anchor Your 2026 Strategy

Don’t let your “Aha!” moments disappear into a “Network Error.”

Benchmark Your Performance

Compare your quote accuracy, cycle time, and margin leakage against the 2026 CPQ Industry Benchmarks.

👉 Read Case Studies

Master CPQ Terminology

Fluency in CPQ jargon = faster vendor evaluation. Explore our CPQ Glossary for definitions of key terms (e.g., “sellability validation,” “deterministic pricing,” “context preservation”).

👉 CPQ Glossary

Learn from the Experts

Hear how enterprise CPQ leaders navigated the Salesforce CPQ sunset, AI integration, and multi-year transformation projects.

👉 Listen to servicePath Podcast

Explore Our Blog Library

Deep-dive into CPQ best practices, AI governance frameworks, and 2026 market trends.

👉 Visit servicePath Blog

Conclusion: 2026 Is the Inflection Year—Choose Wisely

The AI revolution isn’t slowing down—but the trust crisis is accelerating.

Enterprise CPQ leaders face a defining choice in 2026:

- Embrace AI chaos: Bet on conversational AI alone and hope session crashes don’t crater your revenue operations.

- Build the anchor layer: Deploy deterministic CPQ as the institutional memory that makes AI enterprise-safe.

The data is clear:

- 95% of enterprise AI pilots fail without proper governance (MIT, Aug 2025)

- $67.4B lost to AI hallucinations in 2024 alone (AllAboutAI, 2025)

- 40% of CPQ implementations fail without the right vendor and strategy (MGI Research, Aug 2025)

- Gartner predicts 40%+ of agentic AI projects will be canceled by 2027 (Gartner, June 2025)

But the opportunity is equally clear:

- 75% faster quote generation with AI CPQ (MobileForce AI, 2025)

- 23% higher deal closure rates with automation (MobileForce AI, 2025)

- 40% reduction in quote errors with validation layers (Nucleus Research, Nov 2025)

2026 is the year the CPQ market resets. Salesforce’s exit created the opening. AI integration created the urgency. And deterministic validation layers created the solution.

The question isn’t whether to modernize your CPQ strategy.

The question is: Will you anchor AI before it breaks up with you mid-deal—or after?

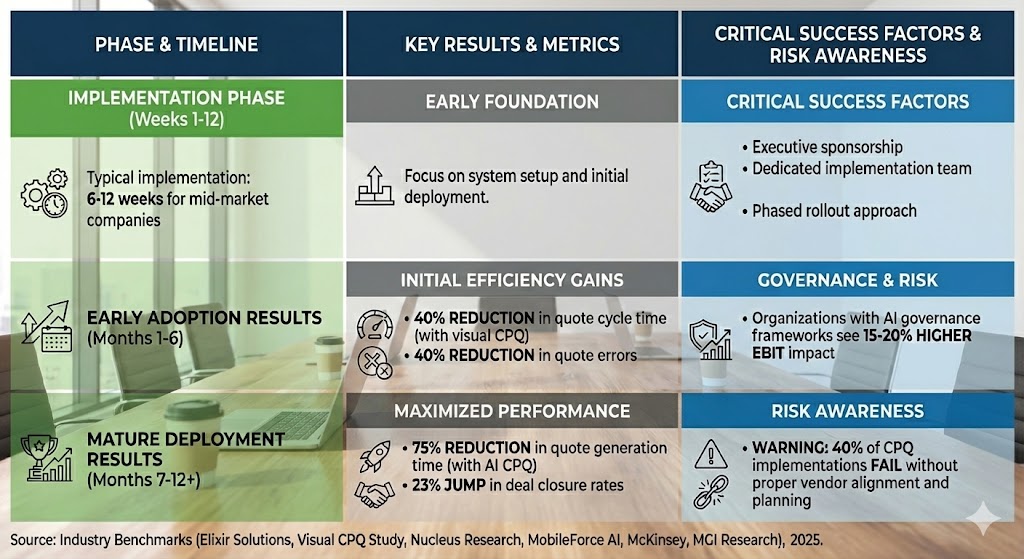

The 2026 CPQ Implementation Results Timeline: Industry Benchmarks

Implementation Phase (Weeks 1-12)

Mid-market companies typically implement CPQ in 6-12 weeks (Elixir Solutions, Aug 2025).

Critical success factors include executive sponsorship, dedicated implementation team, and phased rollout.

Early Adoption Results (Months 1-6)

Visual CPQ users report a 40% reduction in quote cycle time (Visual CPQ Study, 2025).

Quote errors fall by 40% (Nucleus Research, Nov 2025).

Mature Deployment Results (Months 7-12+)

AI CPQ cuts quote generation time by 75% (MobileForce AI, 2025).

Deal closure rates rise by 23% (MobileForce AI, 2025).

Critical Success Factors

Governance matters: organizations with AI governance frameworks see 15-20% higher EBIT impact (McKinsey, Nov 2025).

Risk awareness: 40% of CPQ implementations fail without proper vendor alignment and planning (MGI Research, Aug 2025).

FAQ: Your 2026 CPQ Questions Answered

Q1: “Won’t extended context windows (1M+ tokens) solve the AI memory problem?”

A: Extended context windows help, but they don’t solve the blackout problem.

Specifically, they don’t solve:

- Session crashes (browser/API timeouts still erase context)

- Consistency across sessions (re-prompting with partial context causes drift)

- Compliance enforcement (AI still can’t validate regulatory constraints in real time)

The Fix: CPQ provides persistent storage that survives crashes and enforces rules AI can’t access.

Q2: “How long does CPQ implementation take?”

A: Mid-market companies typically implement in 6-12 weeks (Elixir Solutions, Aug 2025). Enterprise implementations (2,000+ users, multi-region) may take 16-24 weeks.

Critical Success Factors:

- Executive sponsorship (CEO/CFO/CRO alignment)

- Phased rollout (pilot with power users, then scale)

- Dedicated implementation team (don’t treat CPQ as a “side project”)

Q3: “What’s the ROI timeline for enterprise CPQ?”

A: For mid-market companies, payback typically occurs in 4-7 months (Nucleus Research, Nov 2025). Enterprise deployments typically see payback in 9-14 months.

Early Wins (Months 1-6):

- 40% reduction in quote cycle time

- 40% reduction in quote errors

Mature Deployment (Months 7-12+):

- 75% reduction in quote generation time

- 23% increase in deal closure rates

Q4: “How does CPQ compare to legacy quoting tools (Excel, CRM-native quoting)?”

| Capability | Excel/Manual Quoting | CRM-Native Quoting | Enterprise CPQ |

|---|---|---|---|

| Pricing Validation | Manual (error-prone) | Basic rules | Deterministic enforcement |

| Product Compatibility | No checks | Limited | Real-time PLM integration |

| Audit Trail | None | Basic logging | Complete version history |

| Multi-Currency | Manual formulas | Limited | Native support |

| AI Integration | None | Experimental | Validated AI layer |

| Session Persistence | None | Limited | Full institutional memory |

Q5: “Should I wait for the CPQ market to ‘settle’ after Salesforce’s exit?”

A: No. Waiting equals risk.

Here’s why:

- 2026 planning cycles are happening now (Q4 2025/Q1 2026)

- Salesforce CPQ entered End of Sale in March 2025 (Salesforce Ben). Support will end eventually, and a delayed migration means a crisis-mode implementation

- First-mover advantage: Companies deploying CPQ now will have 12+ months of optimization before competitors catch up

Gartner Guidance: “Organizations that delay CPQ modernization will face 20-30% higher implementation costs in 2027 due to rushed timelines and reduced vendor support” (Gartner CPQ Magic Quadrant, 2025).