Unpuzzling Enterprise AI Adoption | Daniel Kube – servicePath™

Disambiguate enterprise AI myths, master LLM renewal risk, and explore AI-native best practices with servicePath™ CEO Daniel Kube.

Disambiguate: The Word of the Day

Let’s kick things off with a term I’ve grown to appreciate, thanks to the verbose brilliance of Chamath Palihapitiya on the All-In Podcast: Disambiguate.

What does it mean? Simply put, it means to remove ambiguity. To clarify. To take something that feels a bit hazy, perhaps even a touch mystical, and shine a big, bright spotlight on it until it makes undeniable sense. And if there’s one area screaming for a good disambiguation right now, it’s Artificial Intelligence (AI) adoption in the enterprise.

Seriously, if I had a nickel for every time I heard someone say, “AI adoption is hard,” I’d probably be competing with Chamath for ‘richest VC podcaster.’ There’s this pervasive, almost mythical belief that bringing AI into your organization is like trying to tame a dragon—a monumental, perilous, and utterly exhausting endeavor reserved for the tech titans with bottomless pockets and an army of PhDs.

But here’s the truth: AI adoption doesn’t have to be an insurmountable challenge. By applying established change management principles and fostering a culture of continuous learning, organizations can seamlessly integrate AI into their operations and reap immediate benefits.

The Myth of Hard Adoption: It’s Just Change Management

Let’s really disambiguate this perceived difficulty. The feeling that AI adoption is an insurmountable mountain often comes from focusing solely on the whiz-bang technology itself, rather than the tried-and-true organizational dynamics. Successful AI integration hinges on principles you already understand and, dare I say, probably excel at:

- Clear Vision and Communication: Just like rolling out a new sales methodology or an updated ERP system, you need to articulate why AI is important for your organization. What specific headaches will it cure? What bold new opportunities will it unlock? Communicate this vision relentlessly and transparently, tackling concerns head-on. Don’t let the fear of the unknown fester.

- Leadership Buy-in and Sponsorship: AI needs champions, not just cheerleaders. When leaders actively participate, demonstrate their commitment, and, yes, even use AI themselves, it signals to the entire organization that this is a priority, not just some fleeting tech experiment. It’s the difference between a mandated memo and genuine enthusiasm.

- Employee Engagement and Empowerment: This is absolutely critical. The “robots are taking our jobs!” narrative is a common hurdle. Instead of viewing AI as a replacement, position it as an augmentation: a powerful co-pilot designed to empower your employees, free them from mundane, soul-crushing tasks, and enable them to focus on higher-value, more creative work. Provide training, provide support, and make them part of the solution, not victims of change.

- Pilot Programs and Iteration: You don’t have to boil the ocean or roll out AI across the entire enterprise on day one. Start small, with well-defined pilot projects that demonstrate tangible value. Learn from these initial successes (and yes, gracefully learn from the inevitable little stumbles!), iterate, and then expand. Think of it as a low-risk, high-reward experiment.

The instant gratification of AI—truly seeing immediate improvements in efficiency, accuracy, or insights—acts as a powerful accelerant to this change management process. It creates positive feedback loops that can quickly overcome initial skepticism and turn cynics into champions.

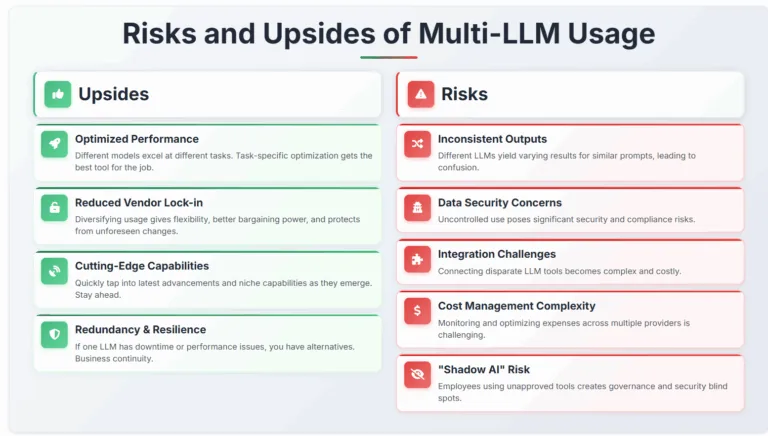

Upsides:

- Optimized Performance: Let’s be honest, no single LLM is perfect for everything. Different models excel at different tasks (e.g., code generation, creative writing, nuanced data analysis). Using multiple models allows for task-specific optimization, getting the best tool for the job.

- Reduced Vendor Lock-in: Diversifying your LLM usage mitigates dependence on a single provider. This gives you flexibility, better bargaining power, and protects you from unforeseen changes in pricing or service. It’s just good business hygiene.

- Access to Cutting-Edge Capabilities: The LLM landscape is evolving at warp speed. By leveraging multiple models, you can more quickly tap into the latest advancements and niche capabilities as they emerge. Stay ahead, not just afloat.

- Redundancy and Resilience: If one LLM experiences downtime, rate limits, or performance hiccups (and trust me, they do), you have alternatives. Business continuity, baby!
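To make the redundancy point concrete, here is a minimal Python sketch of a fallback chain, assuming each provider can be wrapped as a plain function that takes a prompt and returns text. The names `complete_with_fallback`, `flaky_primary`, and `steady_backup` are hypothetical stand-ins, not any vendor's API; a real setup would wrap the actual SDK calls and catch their specific error types.

```python
from typing import Callable, Sequence

# Hypothetical provider type: any function that takes a prompt and returns text.
Provider = Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider raised an error."""


def complete_with_fallback(
    prompt: str, providers: Sequence[tuple[str, Provider]]
) -> tuple[str, str]:
    """Try providers in order; return (name, response) from the first success."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # assumption: narrow this to real SDK errors
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stand-in providers for illustration only.
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("rate limited")


def steady_backup(prompt: str) -> str:
    return f"[backup] answer to: {prompt}"


used, answer = complete_with_fallback(
    "Summarize this quote",
    [("primary", flaky_primary), ("backup", steady_backup)],
)
print(used, answer)
```

The ordering of the list is the design choice here: the preferred model goes first, and the alternatives only get traffic when it fails.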

Risks of Multi-LLM Usage:

- Inconsistent Outputs: Different LLMs may yield varying results for similar prompts, leading to confusion.

- Data Security Concerns: Uncontrolled use of multiple LLMs can pose significant security and compliance risks.

- Integration Challenges: Connecting disparate LLM tools can become a complex and costly endeavor.

- Cost Management Complexity: Monitoring and optimizing expenses across multiple LLM providers can be challenging.

- Lack of Standardization: Ah, the CEO’s recurring nightmare. Without a standardized approach, you can face “Shadow AI”: employees using personal or unapproved LLM tools. While it shows initiative, it creates massive governance and security blind spots. It’s like a rogue IT department, but with AI.

This analogy underscores the pervasive impact AI will have across industries.

“While each AI system showed impressive capabilities in its specific domain, they revealed a universal challenge: the lack of what we call ‘collaborative intelligence.'” — Pascal Bornet, Agentic Artificial Intelligence

Is Lack of Standardization a Risk or an Opportunity?

This is the billion-dollar question for leadership. And the answer, in true nuanced fashion, is both.

Risk:

As outlined, unbridled, non-standardized LLM usage presents significant risks in terms of data security, compliance, consistency, and cost. It can fragment efforts and undermine a cohesive AI strategy, leading to inefficiencies and potential liabilities.

Opportunity:

The very fact that individuals are experimenting with multiple LLMs indicates a strong, organic appetite for AI and a recognition of its intrinsic value within your ranks. This ground-up adoption is a powerful signal! The opportunity lies in channeling this raw enthusiasm into a structured, governed approach that leverages the benefits of diversity while smartly mitigating the risks. It’s about evolving from ad-hoc experimentation to strategic, managed multi-LLM utilization. Think of it as a well-orchestrated symphony, not a chaotic garage band.

“AI adoption in the enterprise hasn’t hit a wall—it’s following the usual timeline.” — Allie K. Miller

Best Practices for Navigating Rapid AI Change and Multi-LLM Environments

1. Develop a Living AI Strategy and Governance Framework

Regularly update policies to reflect technological advancements and organizational needs:

- Approved LLMs and Use Cases: Define which LLMs are approved for what types of data and tasks. Be clear.

- Data Handling and Security Protocols: Crystal-clear guidelines on what data can (and cannot) be shared with LLMs. This protects your IP and your customers.

- Responsible AI Principles: Address bias, fairness, transparency, and accountability. It’s not just about what it can do, but what it should do.

- Performance Metrics: How will you measure the effectiveness and ROI of your LLM investments? No “trust me, bro” here.

- Clear Accountability: Who owns this? Who is responsible for managing and overseeing AI initiatives?

2. Centralize LLM Access and Management: Implement enterprise-grade platforms for centralized oversight, ensuring security and cost-effectiveness.

3. Invest in AI Literacy and Training: Equip employees with the skills to utilize AI tools effectively and responsibly.

4. Foster a Culture of Experimentation within Guardrails: Encourage innovation while maintaining compliance and security standards.

5. Monitor and Adapt Continuously: Stay abreast of AI developments and adjust strategies accordingly.
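An “approved LLMs and use cases” policy gets much easier to enforce once it lives in code instead of a PDF. The sketch below is one possible shape, not a standard: the model labels, data classifications, task names, and owners are all made-up placeholders, and `is_allowed` is a hypothetical helper that a centralized gateway could call before forwarding a request.

```python
from dataclasses import dataclass

# Hypothetical data classifications, ordered from least to most sensitive.
SENSITIVITY = {"public": 0, "internal": 1, "confidential": 2}


@dataclass(frozen=True)
class ModelPolicy:
    model: str                      # placeholder label, not a real product name
    max_data_class: str             # most sensitive data class allowed
    approved_tasks: frozenset[str]  # use cases this model is cleared for
    owner: str                      # accountable team


POLICIES = {
    p.model: p
    for p in [
        ModelPolicy("vendor-a-general", "public",
                    frozenset({"drafting", "summarization"}), "marketing-ops"),
        ModelPolicy("private-hosted", "confidential",
                    frozenset({"summarization", "pricing-analysis"}), "revenue-ops"),
    ]
}


def is_allowed(model: str, task: str, data_class: str) -> bool:
    """Return True only if the model is approved for this task and data class."""
    policy = POLICIES.get(model)
    level = SENSITIVITY.get(data_class)
    if policy is None or level is None:
        return False  # unapproved model (shadow AI) or unknown classification
    return task in policy.approved_tasks and level <= SENSITIVITY[policy.max_data_class]


print(is_allowed("vendor-a-general", "drafting", "public"))
print(is_allowed("vendor-a-general", "drafting", "confidential"))
print(is_allowed("personal-chatbot", "drafting", "public"))
```

Denying by default is deliberate: anything not explicitly listed, whether a model or a data class, is treated as unapproved until someone with accountability adds it.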

Partnering for the AI-Native Future: Selecting Your Allies in a High-Rate-of-Change World

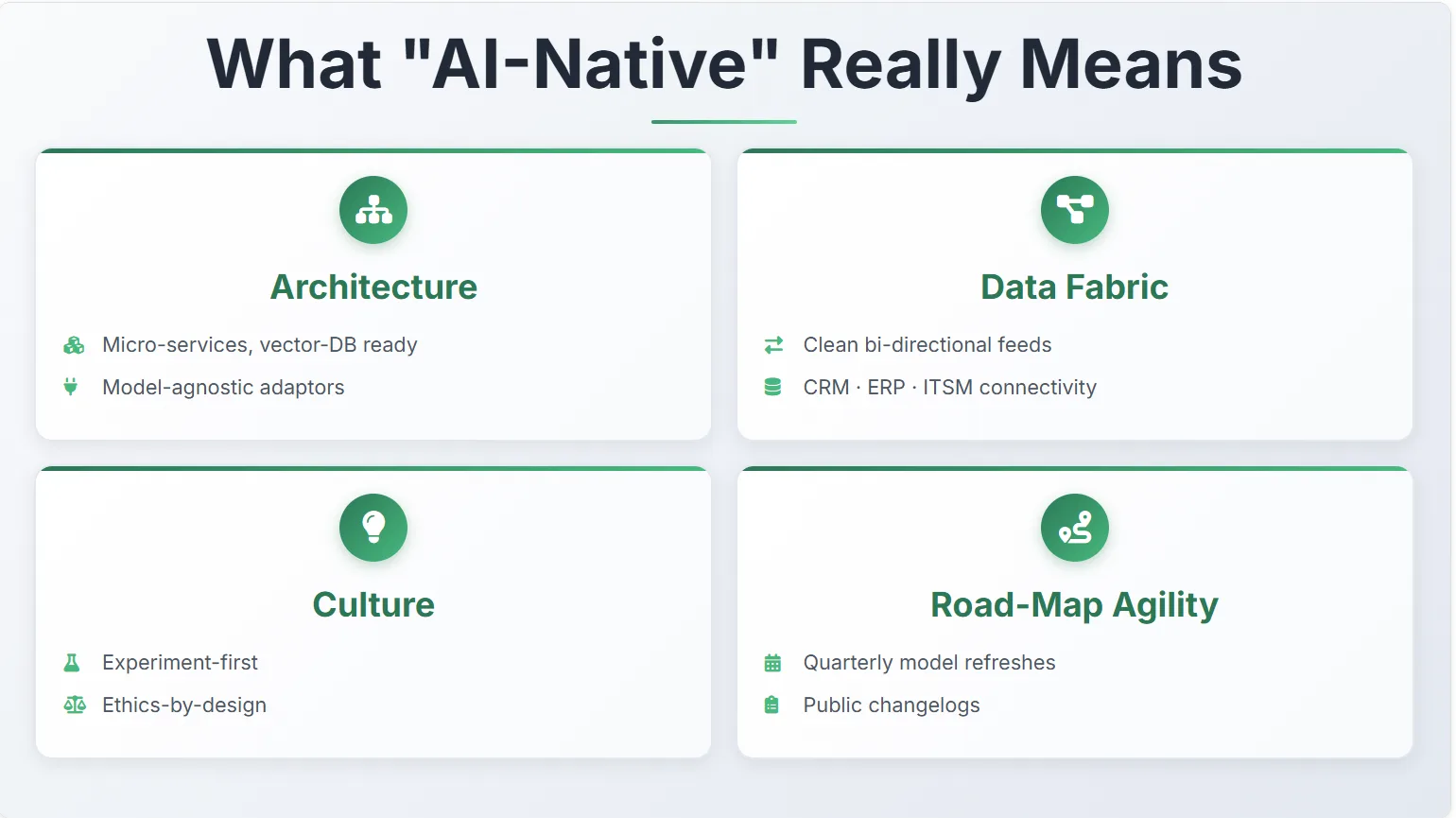

From a Tech Perspective: An AI-native platform is designed with the assumption that AI will be central to its functionality and will continuously evolve. This means:

- Adaptability: Its architecture is inherently flexible, allowing for seamless integration of new models, frameworks, and capabilities as they emerge. It’s not a bolted-on solution that will require a complete overhaul with every major AI advancement. It’s built for tomorrow, not just today.

- Scalability: It’s built to handle increasing data volumes and computational demands as your AI usage grows and new use cases emerge. No sudden performance cliffs.

- Continuous Learning: The system itself is designed to learn and improve over time, not just in its AI models but in its underlying infrastructure and processes. It gets smarter as you use it.

- Data Flow: Data pipelines are optimized for AI, ensuring clean, real-time data flow to power intelligent decision-making across the platform. Garbage in, garbage out applies doubly here.
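One way to picture the adaptability point is a thin layer of indirection: workflows ask for a role (say, “proposal-drafting”), and the platform decides which model currently fills it. The Python sketch below is purely illustrative and is not a description of any particular product's internals; `ModelRegistry`, `draft_proposal`, `model_v1`, and `model_v2` are hypothetical names.

```python
from typing import Callable, Dict

# Assumption: a model is any function that maps a prompt to text.
ModelFn = Callable[[str], str]


class ModelRegistry:
    """Maps a stable role name to whichever model currently serves it."""

    def __init__(self) -> None:
        self._by_role: Dict[str, ModelFn] = {}

    def bind(self, role: str, model: ModelFn) -> None:
        # Rebinding a role swaps the model without touching any workflow.
        self._by_role[role] = model

    def run(self, role: str, prompt: str) -> str:
        return self._by_role[role](prompt)


def draft_proposal(registry: ModelRegistry, customer: str) -> str:
    # The workflow only knows the role, never the concrete model.
    return registry.run("proposal-drafting", f"Draft a proposal for {customer}")


# Stand-in models for illustration only.
def model_v1(prompt: str) -> str:
    return f"v1: {prompt}"


def model_v2(prompt: str) -> str:
    return f"v2: {prompt}"


registry = ModelRegistry()
registry.bind("proposal-drafting", model_v1)
before = draft_proposal(registry, "Acme")

registry.bind("proposal-drafting", model_v2)  # the next-gen model ships
after = draft_proposal(registry, "Acme")
print(before)
print(after)
```

Note that `draft_proposal` is identical before and after the swap; only the binding changed.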

From a Team Perspective: An AI-native team embraces AI not just as a tool, but as a fundamental shift in how work is done. This implies:

- AI Fluency: Team members possess a strong understanding of AI’s capabilities and limitations, moving beyond basic digital literacy to genuine AI literacy. They speak the language.

- Problem-First Approach: They intuitively look for problems that AI can uniquely solve, rather than trying to force-fit AI into existing processes. It’s about innovation, not just automation.

- Experimentation & Iteration: There’s a culture of continuous testing, learning, and refining AI solutions, with a comfort in rapid prototyping and adaptation. They aren’t afraid to fail fast and learn faster.

- Human-AI Collaboration: They focus on designing workflows where humans and AI augment each other’s strengths, leading to higher-value outcomes. It’s about superpowers for your people.

- Ethical Awareness: The team is deeply ingrained with responsible AI principles, ensuring fairness, transparency, and accountability in their AI deployments. This isn’t optional; it’s foundational.

If this walkthrough has helped disambiguate the real-world steps to enterprise-grade AI, imagine what we can achieve together.

servicePath™—born in the cloud and engineered for effortless model-swap flexibility—turns your CPQ+ stack into the clean, real-time data fabric every modern LLM craves.

- No-code integration with Workato, CRMs, ERPs, ITSM, and analytics platforms

- Pilot price-optimization or proposal-drafting models this quarter

- Drop in the next-gen version the moment it ships without rewriting a single workflow

We live what we preach: an architecture built to evolve at AI speed, a culture that prototypes and iterates alongside our customers, and governance hooks that satisfy even the most exacting risk teams.

When you evaluate partners, probe their roadmaps, refresh cadence, and AI fluency—because the right ally isn’t just offering software but acting as a strategic co-pilot who keeps you thriving in a perpetually evolving landscape.

Final Thoughts

Disambiguating AI adoption reveals that it’s less about technological hurdles and more about strategic change management. By embracing a proactive, governed, and people-centric approach, organizations can demystify AI and unlock its immense potential, propelling them into an exciting future.

Thought Leaders to Follow

For deeper insights into AI adoption and strategy:

- Andrew Ng: Co-founder of Google Brain and Coursera, Ng emphasizes practical AI applications and education.
  Andrew Ng: Why AI Is the New Electricity

- Ethan Mollick: Wharton professor and author of Co-Intelligence, Mollick explores human-AI collaboration.
  Co-Intelligence: Living and Working with AI

- Pascal Bornet: Author of Agentic Artificial Intelligence, Bornet focuses on intelligent automation and AI agents.
  Agentic Artificial Intelligence

- Allie K. Miller: CEO of Open Machine, Miller advises on AI strategy and implementation in enterprises.
  Allie K. Miller on Enterprise AI

- Boston Consulting Group (BCG): Provides extensive research and frameworks on enterprise AI strategy and governance.
  When Companies Struggle to Adopt AI, CEOs Must Step Up

- McKinsey & Company: Offers in-depth reports on AI in the workplace and its transformative potential.
  AI in the Workplace: A Report for 2025

Let’s Turn Clarity into Action

If the ideas here resonate, I’d love to keep the momentum going. You can:

- Talk strategy with a servicePath™ Sales Architect. Book a 30-minute consult to map your quote-to-cash data flows to the use cases that matter most.

- Explore deeper content. Our resource centre houses technical white papers, sector-specific case studies, and how-to playbooks.

- Stay ahead with fresh insights. The servicePath™ blog publishes weekly field notes on LLM governance and revenue-ops automation.

Visit us at www.servicePath™.co to schedule a call, download the materials that interest you, and subscribe for ongoing updates—no spam, just actionable intel.

Citations:

- Pascal Bornet, Agentic Artificial Intelligence: Harnessing AI Agents to Reinvent Business, Work and Life, 2024. Amazon.

- Allie K. Miller, “Allie K. Miller on why enterprise AI isn’t failing — it’s right on schedule,” Insight Partners, 2025.

- Andrew Ng, “Why AI Is the New Electricity,” Stanford Graduate School of Business, 2017.

- Ethan Mollick, Co-Intelligence: Living and Working with AI, 2024. Amazon.

- “When Companies Struggle to Adopt AI, CEOs Must Step Up,” BCG, 2025.

- “AI in the workplace: A report for 2025,” McKinsey & Company, 2025.

- servicePath™, “CPQ+ Product Overview,” servicePath™.co

- servicePath™, “Guided Selling Glossary,” servicePath™.co

- broadn – the AI native CPQ platform, “The Case for an AI-Native CPQ Solution,” broadn.io

- servicePath™, “Integration Hub with Workato,” servicePath™.co
